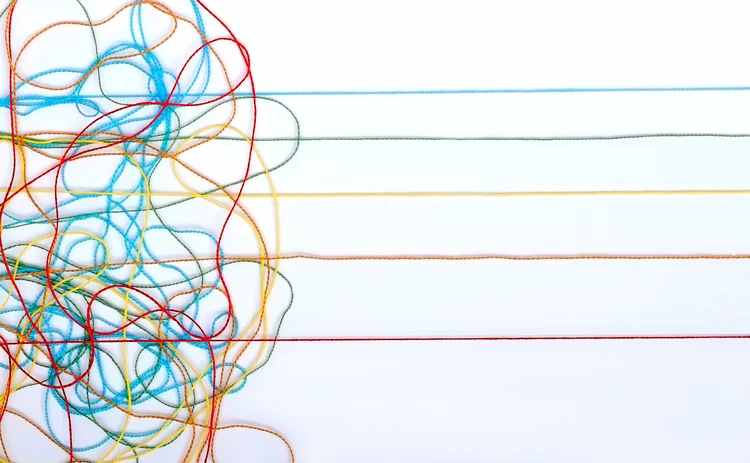

Quants see promise in DeBERTa’s untangled reading

Improved language models are able to grasp context better

Context, as they say, is everything – which is a big problem for investors when they try to use so-called large language models to weigh the sentiment of financial news. The models are notorious for misreading terms that could be either good or bad depending on what’s being talked about at the time.

A few methods have been tried to solve the problem, mostly using the idea that models can look at

Copyright Infopro Digital Limited. All rights reserved.