In defence of Coso

The recent methodological attack on Coso is at best misinformed and at worst irresponsible, say Peyman Mestchian, Mikhail Makarov and Bahram Mirzai

Operational risk management is an old discipline that has gained fresh impetus from Basel II. To advance it further as a recognised and respected risk management discipline requires:

• A framework for operational risk management together with a common language across the industry;

• A set of appropriate risk management techniques and tools;

• A thorough understanding of business processes.

The first two requirements are generic, so one can expect methods developed for enterprise risk management to be applicable. Many institutions have considered applying the framework of the Committee of Sponsoring Organizations of the Treadway Commission (Coso) to operational risk management.

The Coso approach is described in papers one and two of Enterprise Risk Management - Integrated Framework (Coso, 2004a and 2004b). The first paper - the Framework - outlines an integrated approach to enterprise risk management and the second paper - Technical Application - provides an overview of the methods and techniques used in enterprise risk management.

The application of the Coso framework to operational risk has been criticised recently by Ali Samad-Khan (2005). We believe that although the effectiveness of the Coso framework for operational risk remains to be demonstrated in practice, the argument put forward by Samad-Khan is at best misinformed and at worst irresponsible.

Samad-Khan's argument is misinformed because its primary focus on unexpected loss runs counter to the very principles of the Basel Accord, namely the promotion of risk governance; risk management (identification, assessment, monitoring and control/mitigation); and risk disclosure (Basel Committee, 2003).

Operational risk is defined by the Basel Committee as "the risk of loss resulting from inadequate or failed business processes, people and systems or from external events." Unexpected loss relates primarily to capital adequacy under Pillar I of the Accord. However, there is more to risk management than capital adequacy. Consequently, Pillar II stresses the importance of a sound system of internal control and a sound corporate governance structure. It is through Coso-type risk assessment that different risk management needs can be aligned and integrated within one framework.

A paper presented to the Institute of Actuaries last year, Quantifying Operational Risk in General Insurance Companies (Giro, 2004), moves away from the purely statistical approaches that have traditionally been applied in this area and concludes: "Whilst not purely strictly actuarial in some past senses of the word, this [operational risk management] means beginning by identifying, assessing and understanding operational risk, and being able to view various forms of control as important, as well as understanding their impact - all before using statistical measurement techniques. This requires insight into, and understanding of process management, organisational design including defining roles and responsibilities, occupational psychology and general management. The actuarial analytic training is good grounding for such work, but by no means a passport to success."

'Reverse engineering' some high-profile operational risk failures, such as Barings, BCCI or Enron, demonstrates why Samad-Khan's argument is irresponsible. Had these companies deployed a sound and integrated risk management framework (as outlined in Coso, 2004a and 2004b, and Basel Committee, 2003), the failures that beset them could have been avoided entirely or, at a minimum, detected earlier. Such reverse engineering also reveals a second way in which the criticism is irresponsible: the eventual major impact of these failures resulted from a complex chain of smaller events and control failures of the kind that Coso-type risk assessments would normally identify. Indeed, it is the study of such major failures that has led to Coso-based regulation, such as Sarbanes-Oxley, and to risk-based auditing, which is at the heart of most modern financial auditing standards. History has shown that it is dangerous to ignore such evidence, from both a methodological and a legal point of view.

Risk managers have used Coso-based assessment for many years, in both the financial and non-financial sectors. The UK's Financial Services Authority, for example, states in Consultation Paper 142: "A key issue is operational risk measurement. Due to both data limitations and lack of high-powered analysis tools, a number of op risks cannot be measured accurately in a quantitative manner at the present time. So we use the term risk assessment in place of measurement, to encompass more qualitative processes, including for example the scoring of risks as 'high', 'medium' and 'low'. However, we would still encourage firms to collect data on their operational risks and to use measurement tools where this is possible and appropriate. We believe that using a combination of both quantitative and qualitative tools is the best approach to understanding the significance of a firm's operational risks."

Samad-Khan's (2005) argument is based on four main points:

• The definition of the risk used by Coso is flawed.

• A likelihood-impact risk assessment is flawed.

• The methods prescribed by Coso are highly subjective, and only risk assessment based on historic losses is valid.

• Risk assessment under the Coso approach is too complex and resource-intensive.

In the following sections we comment on each of these points in detail.

Definition of risk

The argument that the definition of risk is flawed is based on the equation:

Risk = likelihood × impact

Neither Coso publication (2004a, 2004b) states that this formula should be used as a measure of risk. On the contrary, the Coso framework suggests the use of value-at-risk or capital-at-risk.

Samad-Khan's discussion of expected and unexpected loss may also lead to the misconception that only unexpected loss matters when managing op risk. On that view, a $100 million credit card fraud loss that recurs every year should not be considered in risk assessment, because its expected loss contribution is $100 million and its unexpected loss contribution is zero.

In reality, the important quantity is the cost of risk, expressed as:

Cost of risk = expected loss + cost of capital

In other words, the cost of risk (COR) is the sum of the expected loss and the cost of capital required to cover the unexpected loss.

To illustrate the formula, suppose a bank has average op risk losses of $300 million a year and holds $1.5 billion of capital to cover unexpected losses. Assuming the bank's cost of capital is 5%, the COR becomes:

Cost of risk = 300 + 5% × 1500 = $375 million
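The arithmetic above is simple enough to check in a few lines of Python. This is a minimal sketch under the example's own assumptions: the capital held equals the capital needed to cover unexpected loss, and the cost of capital is a single annual rate. The function name `cost_of_risk` is our own illustration, not a Coso term, and all amounts are in $ millions. The second call revisits the credit card fraud example: a loss that is the same every year needs no capital for unexpected loss, yet its cost of risk is still the full $100 million.

```python
def cost_of_risk(expected_loss: float, capital: float, cost_of_capital_rate: float) -> float:
    """Cost of risk = expected loss + (cost-of-capital rate x capital held).

    All amounts in the same unit, here $ millions per year.
    """
    return expected_loss + cost_of_capital_rate * capital


# Bank example: $300m average annual loss, $1.5bn capital, 5% cost of capital.
bank_cor = cost_of_risk(expected_loss=300.0, capital=1500.0, cost_of_capital_rate=0.05)
print(f"Bank cost of risk: ${bank_cor:.0f}m")          # Bank cost of risk: $375m
print(f"Expected-loss share: {300.0 / bank_cor:.0%}")  # Expected-loss share: 80%

# Credit card fraud example: a $100m loss every year has no unexpected component,
# so no capital is held against it, but the cost of risk is still $100m.
fraud_cor = cost_of_risk(expected_loss=100.0, capital=0.0, cost_of_capital_rate=0.05)
print(f"Fraud cost of risk: ${fraud_cor:.0f}m")        # Fraud cost of risk: $100m
```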

If an organisation is to perform cost-benefit analysis within an integrated operational risk management framework, evaluation of the COR is crucial because an organisation's willingness to take a risk will depend on whether or not the COR justifies the anticipated returns. As the example shows, expected loss can play a dominant role in the analysis of the COR.

For most risks, the unexpected loss will only have a small impact on COR. Expected loss will be the main driver. Cost of capital will predominate only in the case of rare and severe-impact risks.

Consequently, the largest part of any reduction in the COR will come from a reduction in expected loss. In this respect, a Coso-type framework that addresses common risks as well as rare ones will prove useful.
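The claim that expected loss drives the COR for common risks, while the cost of capital dominates for rare, severe ones, can be illustrated with a toy model. The sketch below assumes Poisson event counts with a fixed loss per event, measures unexpected loss as the 99.9% one-year quantile minus the expected loss (the confidence level Basel II sets for advanced measurement approaches), and reuses the 5% cost of capital from the example. Both risk profiles are hypothetical.

```python
import math


def poisson_quantile(lam: float, p: float) -> int:
    """Smallest k with P(N <= k) >= p for N ~ Poisson(lam), found by summing the pmf.

    Suitable for moderate lam only: exp(-lam) underflows to zero above roughly 700.
    """
    k = 0
    pmf = math.exp(-lam)  # P(N = 0)
    cdf = pmf
    while cdf < p:
        k += 1
        pmf *= lam / k    # P(N = k) = P(N = k - 1) * lam / k
        cdf += pmf
    return k


def cor_breakdown(freq: float, severity: float, rate: float = 0.05, confidence: float = 0.999):
    """Expected loss, unexpected loss and cost of risk for a hypothetical risk with
    Poisson(freq) events a year, each costing a fixed `severity` (a simplification)."""
    expected = freq * severity
    unexpected = poisson_quantile(freq, confidence) * severity - expected
    return expected, unexpected, expected + rate * unexpected


# Hypothetical profiles, amounts in $ millions.
risks = {
    "frequent, low impact": (100.0, 1.0),   # about 100 events a year at $1m each
    "rare, severe impact": (0.01, 1000.0),  # one $1bn event roughly every 100 years
}
for name, (freq, sev) in risks.items():
    el, ul, cor = cor_breakdown(freq, sev)
    print(f"{name}: EL={el:.1f} UL={ul:.1f} COR={cor:.1f} EL share={el / cor:.0%}")
```

With these made-up parameters the frequent risk's COR is almost entirely expected loss, while roughly four-fifths of the rare risk's COR is cost of capital (an expected loss of 10 against a capital charge of 49.5).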

Likelihood-impact based risk assessment

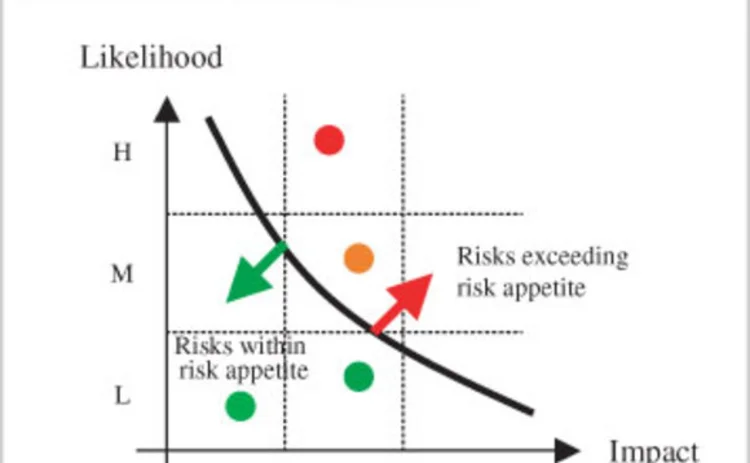

One approach considered in the Coso framework is the likelihood-impact assessment. This was originally introduced in MIL STD 882A - a military system safety standard created by the US Department of Defense. This document has been widely and successfully used by risk and safety practitioners since its introduction in 1977. It sets out an approach that maps different risks into a matrix similar to the one shown in figure 1.

According to this approach, for each risk the frequency of occurrence (likelihood) and the worst credible outcome (impact) are assessed and captured in a likelihood-impact matrix. The matrix is then compared with a risk appetite map, which outlines the maximum level of adverse risk outcome that an organisation is willing to accept. As a result of this comparison, any significant risk exceeding the risk appetite will call for management action. The matrix helps with risk assessment and provides a visual representation of risk.
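The comparison of a likelihood-impact matrix against a risk appetite map can be sketched as follows. The five-point scales, the appetite map (the highest acceptable impact at each likelihood level) and the example risks are all hypothetical; real frameworks define their own categories and thresholds.

```python
from dataclasses import dataclass

# Hypothetical five-point scales, lowest first.
LIKELIHOOD = ["rare", "unlikely", "possible", "likely", "almost certain"]
IMPACT = ["negligible", "minor", "moderate", "major", "severe"]

# Hypothetical risk appetite map: for each likelihood level, the highest
# impact level (index into IMPACT) the organisation is willing to accept.
APPETITE = {"rare": 3, "unlikely": 2, "possible": 2, "likely": 1, "almost certain": 0}


@dataclass
class Risk:
    name: str
    likelihood: str  # assessed frequency of occurrence
    impact: str      # assessed worst credible outcome


def exceeds_appetite(risk: Risk) -> bool:
    """True if the risk's matrix cell lies beyond the appetite map."""
    return IMPACT.index(risk.impact) > APPETITE[risk.likelihood]


def matrix(risks: list[Risk]) -> str:
    """Text matrix of risk counts per cell; columns run negligible -> severe,
    and '*' marks cells beyond the risk appetite."""
    rows = []
    for lik in reversed(LIKELIHOOD):  # most likely row at the top
        cells = []
        for j, imp in enumerate(IMPACT):
            n = sum(1 for r in risks if r.likelihood == lik and r.impact == imp)
            cells.append(f"{n}{'*' if j > APPETITE[lik] else ' '}")
        rows.append(f"{lik:>14} | " + "  ".join(cells))
    return "\n".join(rows)


# Hypothetical risk register.
risks = [
    Risk("card fraud", "almost certain", "minor"),
    Risk("rogue trading", "rare", "severe"),
    Risk("settlement errors", "likely", "moderate"),
    Risk("system outage", "possible", "moderate"),
]
print(matrix(risks))
print("Management action needed:", [r.name for r in risks if exceeds_appetite(r)])
```

Only the cells, not a single likelihood × impact product, decide whether action is needed, which is how the matrix is used as described above.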

Samad-Khan's criticism of the likelihood-impact approach rests on a misunderstanding. When likelihood-impact assessment is used only to check whether a risk exceeds the risk appetite, it is sufficient to estimate the frequency and the worst credible outcome of each risk. When a comprehensive risk assessment is required, however, one needs to estimate likelihood and impact for several outcomes of the risk. Risk assessment is often not performed directly in terms of distributions; more typically, its results are subsequently translated into frequency and severity distributions. A corporate credit rating is a well-known example of a risk assessment whose outcome is translated into frequency and severity distributions.

For example, in credit risk assessment one can estimate frequency of losses (PD in credit terminology), expected impact (LGD in credit terminology) and worst credible impact (EAD in credit terminology). Clearly, the results of such a risk assessment can be translated into frequency and severity distributions.
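One common way to turn such an assessment into a severity distribution, offered here as an illustration rather than anything Coso prescribes, is to choose a lognormal whose mean equals the assessed expected impact and whose 99th percentile equals the worst credible impact; the assessed frequency can then serve directly as a Poisson rate. Both the lognormal family and the reading of "worst credible" as a 1-in-100 outcome are assumptions, and the figures are hypothetical.

```python
import math
from statistics import NormalDist


def fit_lognormal(mean_impact: float, worst_impact: float, worst_prob: float = 0.99):
    """Return (mu, sigma) of a lognormal with the given mean and `worst_prob` quantile.

    Solves ln(worst / mean) = z * sigma - sigma**2 / 2 and takes the smaller
    (lighter-tailed) root.
    """
    z = NormalDist().inv_cdf(worst_prob)
    gap = math.log(worst_impact / mean_impact)
    disc = z * z - 2.0 * gap
    if gap <= 0 or disc < 0:
        raise ValueError("no lognormal matches this mean and worst case at this probability")
    sigma = z - math.sqrt(disc)
    mu = math.log(mean_impact) - sigma ** 2 / 2.0
    return mu, sigma


def severity_quantile(mu: float, sigma: float, p: float) -> float:
    """p-quantile of the fitted lognormal severity."""
    return math.exp(mu + sigma * NormalDist().inv_cdf(p))


# Hypothetical assessment: about 4 events a year, expected impact $0.5m, worst credible $5m.
frequency = 4.0
mu, sigma = fit_lognormal(mean_impact=0.5, worst_impact=5.0)
print(f"sigma = {sigma:.2f}")
print(f"expected annual loss = ${frequency * 0.5:.1f}m")
print(f"1-in-1,000 single-event loss = ${severity_quantile(mu, sigma, 0.999):.1f}m")
```

At a 99% worst-case probability the fit fails once the worst case exceeds roughly 15 times the expected impact, a reminder that the choice of distribution family is itself a modelling judgement.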

Subjective versus statistical risk assessment

It is interesting to observe the degree of antagonism between 'business experts' and 'statisticians'. Business experts insist on the use of subjective risk assessment and argue that reliance on statistical analysis is like driving a car while only looking in the rear-view mirror. Statisticians, on the other hand, argue that subjective assessment is like predicting the future with a crystal ball.

Modern risk management frameworks, such as Basel II, Coso or MIL STD 882A, require integrated approaches that combine subjective and data-driven risk assessment. The weight assigned to each approach depends on the degree of confidence placed in each set of information.
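A simple way to make "weight depends on confidence" concrete is inverse-variance weighting, in which each estimate is weighted by the reciprocal of its squared standard error. This is our illustration, not a method prescribed by Basel II, Coso or MIL STD 882A, and the two frequency estimates below are hypothetical.

```python
def blend(estimates: list[tuple[float, float]]) -> tuple[float, float, list[float]]:
    """Combine (value, standard_error) pairs by inverse-variance weighting.

    Returns the blended value, its standard error and the normalised weights.
    """
    raw = [1.0 / se ** 2 for _, se in estimates]
    total = sum(raw)
    value = sum(w * v for w, (v, _) in zip(raw, estimates)) / total
    return value, (1.0 / total) ** 0.5, [w / total for w in raw]


# Hypothetical annual event-frequency estimates for a single risk.
expert_view = (12.0, 4.0)  # scenario workshop: higher, but less certain
loss_data = (6.0, 2.0)     # internal loss history: lower, more certain
value, se, weights = blend([expert_view, loss_data])
print(f"blended frequency {value:.1f} (s.e. {se:.1f}); "
      f"weights {weights[0]:.0%} expert, {weights[1]:.0%} data")
# blended frequency 7.2 (s.e. 1.8); weights 20% expert, 80% data
```

If the loss history were shorter, its standard error would grow and the blend would shift towards the expert view.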

The need to use both approaches is particularly clear when assessing rare risks with an extreme impact, such as the attacks on the World Trade Center in New York or the South-east Asian tsunami. Clearly, when assessing terrorism risk, one should take into account the terrorism alert levels issued by the US government, even though they incorporate subjective judgement.

Organisations that are successful at risk management adopt a balanced and complementary approach, one that uses both subjective and data-driven risk assessment methods. Figure 2 describes some examples of quantitative and qualitative techniques that are applicable to operational risk management.

Complexity of assessment process

It is misleading to argue that Coso-type risk assessment is too complex and resource-intensive. Many organisations have applied such approaches with great success. For example, the pioneering work embodied in MIL STD 882A has been incorporated into system safety standards used in the chemical processing industry (US Environmental Protection Agency's 40CFR68) and the medical device industry (the US Food and Drug Administration's requirements for pre-market notification). The semiconductor manufacturing and nuclear power industries use many techniques to analyse system safety during the design of production processes, equipment and facilities. They do so because the cost of 'mistakes' is enormous in terms of production capability, product quality and, ultimately, human life.

Obviously, the aim of operational risk management is not simply to gain a more accurate measurement of risk. It seeks to reduce operational losses and the overall cost of operational risk. For most financial institutions, a reduction of expected loss in the order of 10% would justify the risk assessment process and cover the cost of the resources involved. We believe that the development of op risk frameworks, tools, and management techniques that help firms to reduce their losses from op risks will remain a key priority beyond the implementation deadlines of Basel II.
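The 10% rule of thumb can be checked against the illustrative bank used earlier ($300 million average annual losses, $1.5 billion of capital, 5% cost of capital). The sketch assumes the reduction affects expected loss only and leaves capital unchanged; the assessment programme cost is a hypothetical figure, and the function names are our own.

```python
def cost_of_risk(expected_loss: float, capital: float, rate: float) -> float:
    """Cost of risk = expected loss + cost-of-capital rate x capital ($m a year)."""
    return expected_loss + rate * capital


def net_benefit(expected_loss: float, capital: float, rate: float,
                el_reduction: float, programme_cost: float) -> float:
    """Annual fall in cost of risk from cutting expected loss by `el_reduction`,
    minus the annual cost of the risk assessment programme. Capital is held fixed."""
    before = cost_of_risk(expected_loss, capital, rate)
    after = cost_of_risk(expected_loss * (1 - el_reduction), capital, rate)
    return (before - after) - programme_cost


# Bank figures from the cost-of-risk example; the $10m programme cost is hypothetical.
benefit = net_benefit(300.0, 1500.0, 0.05, el_reduction=0.10, programme_cost=10.0)
print(f"Net annual benefit: ${benefit:.0f}m")
```

Because capital is held fixed here, the gross saving is simply 10% of expected loss, $30 million a year; any accompanying reduction in capital would add to it.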

Conclusion

We strongly believe that, ultimately, the primary goal of operational risk management should be business success and value creation. These goals are more important than the fear of failing compliance tests or of not ensuring capital adequacy. Such motivators are vital, but secondary.

Operational risk practitioners should be wary of specialists who are dogmatic in their approach. Ultimately, if the only tool in your toolbox is a hammer, every problem will start to look like a nail. As the subject continues to evolve, the exclusion of any specific approach or framework is bound to result in a flawed and narrow-minded solution. Operational risk management requires practitioners who have open minds, are able to learn from others, and are willing to be flexible and explore other methodologies.

Peyman Mestchian is head of the risk intelligence practice at SAS EMEA. Bahram Mirzai and Mikhail Makarov are managing partners at EVMTech, a company specialising in operational risk. Email: peyman.mestchian@suk.sas.com, bmirzai@evmtech.com, mmakarov@evmtech.com

REFERENCES

Basel Committee on Banking Supervision, 2003. Sound practices for the management and supervision of operational risk. February.

Committee of Sponsoring Organizations of the Treadway Commission, 2004a. Enterprise risk management - integrated framework: executive summary and framework.

Committee of Sponsoring Organizations of the Treadway Commission, 2004b. Enterprise risk management - integrated framework: technical applications.

Giro working party, 2004. Quantifying operational risk in general insurance companies. Presented to the Institute of Actuaries, March.

Samad-Khan A, 2005. Why Coso is flawed? Operational Risk, January, pages 24-28.


Copyright Infopro Digital Limited. All rights reserved.
