No silver bullet for AI explainability

No single approach to interpreting a neural network’s outputs is perfect, so it’s better to use them all

As artificial intelligence models become more powerful, explaining their outputs also becomes more challenging.

Deep learning techniques – and neural networks in particular – are playing an increasingly important role within financial institutions, where they are used to automate everything from options hedging to credit card lending. The outputs of these models are the result of interactions between the hidden layers of the network, which are often difficult to trace, let alone explain.

Interpreting these model outputs efficiently is necessary not only for financial institutions to build reliable and transparent models, but also to satisfy increasing regulatory scrutiny. “Regulators are looking into how automated decisions are made, and whether they have some biases that hadn’t been discovered before,” says Ksenia Ponomareva, global head of analytics at Riskcare, and one of the authors of Interpretability of neural networks: a credit card default model example.

Ponomareva, and Simone Caenazzo, a senior quant analyst at Riskcare, studied some popular approaches to explaining the outputs of neural networks.

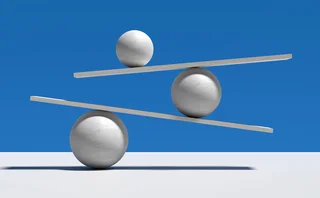

They conclude that none of the techniques considered in their study is always superior to the others, but rather that each has its own particular strengths. Furthermore, because the techniques provide different insights, a combination of several may be more informative.

Three explainability techniques – relevance analysis, sensitivity analysis and neural activity analysis – are considered in the paper.

The first of these measures the relevance of each input variable used in a neural network. By aggregating these individual measures, it is possible to assess each variable’s marginal contribution to the output.
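To make the idea concrete, the sketch below shows one simple way relevance can be computed and aggregated. It is an illustration under stated assumptions, not the method used in the paper: the tiny bias-free ReLU network, its weights, the two sample inputs and the function names `score` and `relevance` are all hypothetical. Each hidden unit's share of the output is split among the inputs in proportion to what they fed into that unit, and the per-sample results are then averaged in absolute value.

```python
def score(x, hidden_weights, output_weights):
    """Output of a toy bias-free network with one ReLU hidden layer."""
    total = 0.0
    for w_j, v_j in zip(hidden_weights, output_weights):
        pre_activation = sum(w * xi for w, xi in zip(w_j, x))
        total += v_j * max(0.0, pre_activation)
    return total


def relevance(x, hidden_weights, output_weights):
    """Split the score of one sample across its input variables."""
    rel = [0.0] * len(x)
    for w_j, v_j in zip(hidden_weights, output_weights):
        contributions = [w * xi for w, xi in zip(w_j, x)]
        pre_activation = sum(contributions)
        if pre_activation <= 0:
            continue  # an inactive ReLU unit passes nothing to the output
        unit_relevance = v_j * pre_activation  # this unit's share of the score
        for i, c in enumerate(contributions):
            # Each input receives a share proportional to its contribution.
            rel[i] += unit_relevance * c / pre_activation
    return rel


# Hypothetical weights: hidden_weights[j][i] links input i to hidden unit j.
hidden_weights = [[1.0, -1.0, 0.0], [0.5, 0.5, 0.0]]
output_weights = [2.0, -1.0]
samples = [[2.0, 1.0, 3.0], [0.0, 1.0, -1.0]]

per_sample = [relevance(x, hidden_weights, output_weights) for x in samples]

# Aggregate: mean absolute relevance of each input across the samples.
aggregate = [
    sum(abs(r[i]) for r in per_sample) / len(per_sample)
    for i in range(len(samples[0]))
]
```

In this toy setup the relevances of a sample add up to its score, and the third input, which the network ignores, ends up with zero aggregate relevance.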

Sensitivity analysis measures how changes to input variables affect the output. This can help researchers identify which input variables influence the output the most, and how the output responds when those variables change.
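A minimal sketch of this idea, assuming a generic finite-difference approach rather than the authors' own implementation, is to nudge each input up and down by a small step and record how much the output moves. The logistic stand-in model, its weights, the feature order and the applicant values below are hypothetical.

```python
import math


def default_probability(x, weights, bias):
    """Toy stand-in for a trained model: logistic score of the inputs."""
    z = bias + sum(w * xi for w, xi in zip(weights, x))
    return 1.0 / (1.0 + math.exp(-z))


def sensitivities(model, x, step=1e-4):
    """Central finite-difference estimate of d(output)/d(input_i)."""
    result = []
    for i in range(len(x)):
        up = list(x)
        up[i] += step
        down = list(x)
        down[i] -= step
        result.append((model(up) - model(down)) / (2 * step))
    return result


# Hypothetical feature order: [months_late, credit_limit, age]
WEIGHTS = [1.2, -0.3, 0.0]
BIAS = -1.0


def model(x):
    return default_probability(x, WEIGHTS, BIAS)


applicant = [2.0, 1.0, 0.5]
sens = sensitivities(model, applicant)

# Rank the inputs from most to least influential at this point.
ranking = sorted(range(len(sens)), key=lambda i: abs(sens[i]), reverse=True)
```

The sign of each entry in `sens` shows whether raising that input pushes the toy default probability up or down, and `ranking` orders the inputs by the size of that effect. These are local measures: they describe the model's behaviour around one applicant, so in practice they would be computed across many samples.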

Neural activity analysis is used to catalogue the paths in the neural network that are activated most frequently. This can highlight potential biases or inefficiencies in the data or in the network itself by detecting paths or nodes that are either activated very often or not at all.
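As a rough illustration of this kind of cataloguing, the sketch below counts how often each hidden neuron fires across a set of inputs and flags neurons that are always or never active. It assumes a single ReLU hidden layer; the weights, biases, samples and function names are hypothetical, and the paper's own procedure may differ.

```python
def hidden_layer(x, neuron_weights, neuron_biases):
    """ReLU activations of one hidden layer for a single input vector."""
    return [
        max(0.0, b + sum(w * xi for w, xi in zip(w_j, x)))
        for w_j, b in zip(neuron_weights, neuron_biases)
    ]


def activation_frequency(samples, neuron_weights, neuron_biases):
    """Fraction of samples for which each hidden neuron is active (> 0)."""
    counts = [0] * len(neuron_weights)
    for x in samples:
        activations = hidden_layer(x, neuron_weights, neuron_biases)
        for j, a in enumerate(activations):
            if a > 0:
                counts[j] += 1
    return [c / len(samples) for c in counts]


# Hypothetical layer: neuron 0 depends on the first input only,
# neuron 1 has a positive bias and no weights, neuron 2 a negative bias.
neuron_weights = [[1.0, 0.0], [0.0, 0.0], [0.0, 0.0]]
neuron_biases = [0.0, 1.0, -1.0]
samples = [[1.0, 0.0], [-1.0, 0.0], [2.0, 1.0], [-3.0, 2.0]]

frequency = activation_frequency(samples, neuron_weights, neuron_biases)

# Neurons that fire for every sample or for none deserve a closer look.
always_on = [j for j, f in enumerate(frequency) if f == 1.0]
never_on = [j for j, f in enumerate(frequency) if f == 0.0]
```

A neuron that never fires contributes nothing to the output, while one that always fires does not discriminate between inputs. Either pattern can point to redundancy in the network or imbalance in the data.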

Ponomareva and Caenazzo tested the approaches using a standard neural network and a credit card dataset popular with researchers in finance. This is a widely tested application of AI and is useful for assessing the information that each approach to interpretability is able to provide.

Each of the three techniques provided information that, when collated, presented a broader and clearer picture of how the output was obtained: relevance analysis showed that gender, education and marital status are significant factors in a default probability model; sensitivity analysis revealed the output is particularly sensitive to late payments; and neural activity analysis provided some insight into whether candidates were being clustered in a consistent way by observing how they activate particular neurons.

“We found the neural network was sensitive to how late customers made payments,” says Ponomareva, “whereas other models were more punitive towards a certain age or gender groups, or the marriage status.”

