We need a different approach to supervisory stress-testing

Confusing processes turn tests into a template-filling exercise, says Garp’s Jo Paisley

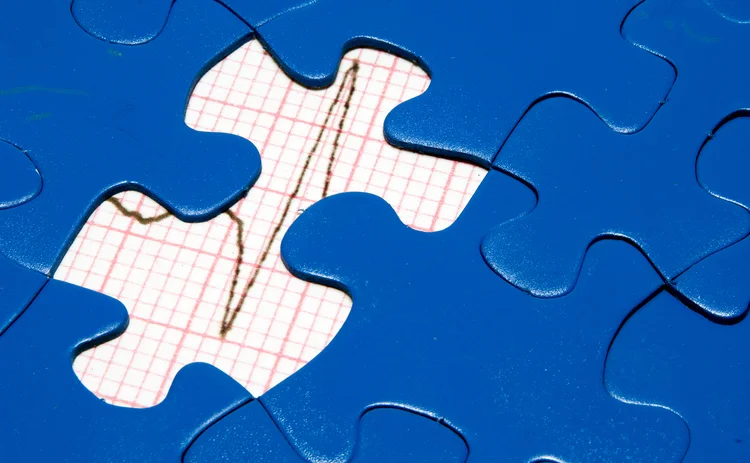

If you Google ‘stress test’, you’ll most likely see a person on a treadmill, hooked up to electrodes, with an ECG monitoring his or her heart. A stress test for a financial institution is similar: it puts the firm under strain and tests whether it is healthy enough to cope.

From a risk management point of view, stress-testing is a good thing. It’s forward-looking and provides insights into the

Copyright Infopro Digital Limited. All rights reserved.
