This article was paid for by a contributing third party.

Why AI-related conduct risk is reshaping the business agenda

As conduct risks become faster, costlier and more difficult to predict, firms need risk intelligence that combines artificial intelligence scale with human judgement, says Alexandra Mihailescu Cichon, vice-president and global head of market development at RepRisk

Over the past decade, risk management in financial institutions has become more data-driven and automated. Digitisation accelerated, models became more complex and AI was positioned as a way to manage ever-growing volumes of risk data. Yet efficiency and sophistication have not always translated into greater foresight.

New evidence from The business conduct risk intelligence report 2026 shows that the risk landscape has shifted in both substance and structure, in ways that expose the limits of AI-only approaches and force a reassessment of how risk intelligence is produced and used. The report is based on a worldwide survey of more than 500 C-suite executives across banks, asset managers and asset owners, conducted by RepRisk and Oxford Economics.

From compliance risk to commercial risk

What stands out in the data is not simply that risks are increasing, but that they are evolving. The nature of business conduct risk is shifting in ways that challenge traditional assumptions about how it can be identified, assessed and managed.

As a result, business conduct risk is no longer treated as a peripheral compliance concern. It increasingly represents a material threat to commercial performance, balance sheet resilience and competitive positioning.

This shift matters because it raises the bar for what effective risk intelligence must deliver. When the downside is reputational discomfort, speed alone may suffice. When the downside is financial loss, then accuracy, explainability and defensibility become non-negotiable.

A new class of risk

The composition of risk is also changing. Traditional conduct risks – such as corruption, fraud or sanctions breaches – remain, but they are increasingly joined by fast-evolving exposures that do not fit neatly into historical taxonomies.

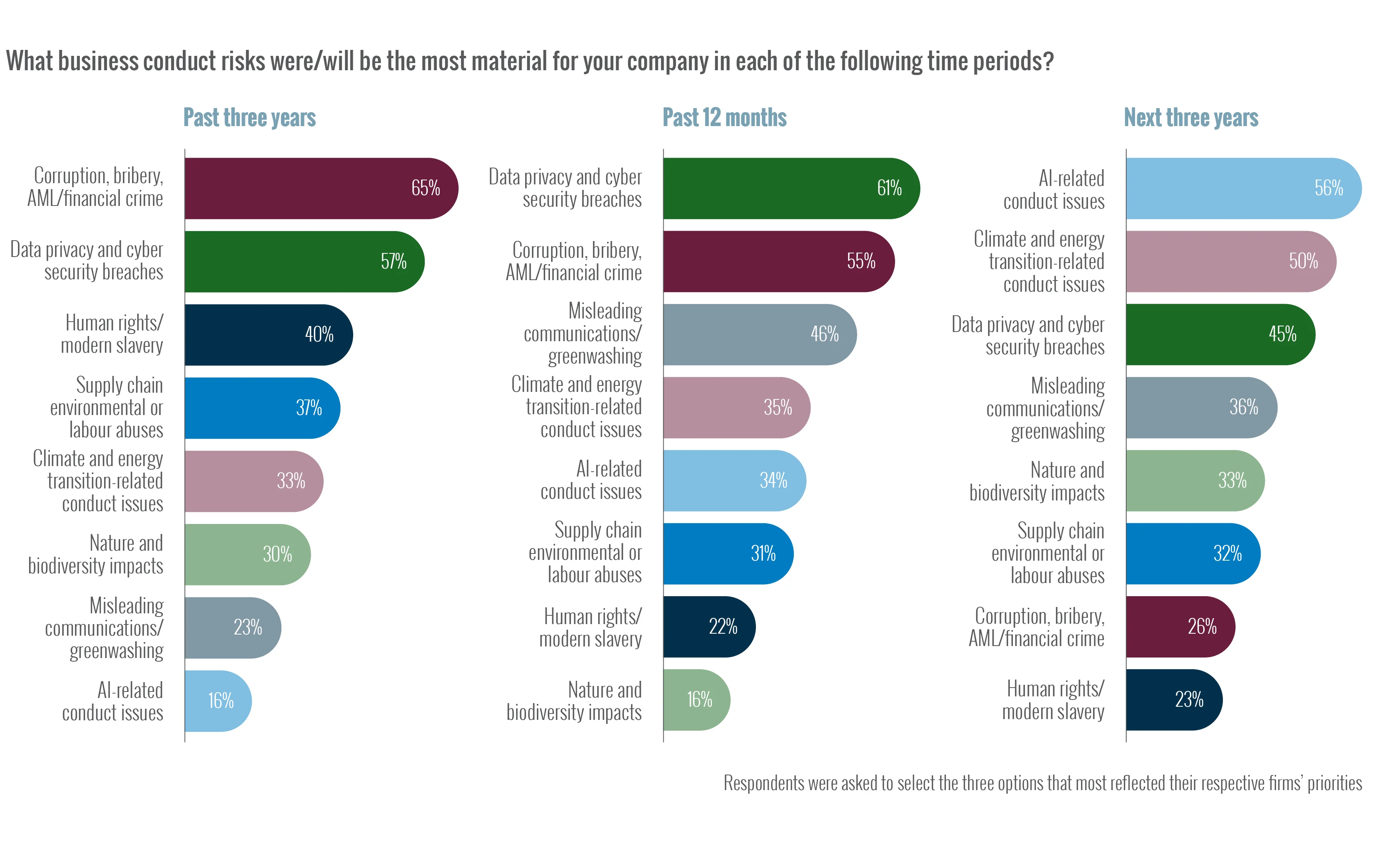

According to the report, material risks are shifting towards AI-related conduct risk, data integrity failures, and climate and energy transition-related issues. These are not static risks. They evolve across jurisdictions, technologies and business models, often faster than formal regulation can keep up.

Notably, executives expect AI-related conduct risk to rise sharply in the coming years. While only 16% of executives considered it material over the past three years, 56% expect it to be among the most significant risks over the next three.

This creates a paradox: AI is increasingly used to manage risk, yet is also emerging as a source of risk.

The illusion of pure AI

As complexity increases, many institutions have leaned further into automation – more data, more models and more machine learning. The report’s findings suggest, however, that senior decision-makers are becoming more cautious: not about AI’s usefulness, but about whether AI alone can meet the evidentiary demands of due diligence and risk governance.

While nearly three-quarters of surveyed executives report using hybrid human-AI approaches, just 35% say they trust AI-only data for material investment and risk decisions. By contrast, 67% trust hybrid data that combines machine processing with human analysis.

The difference in confidence is telling. AI is effective at identifying patterns, but risk decisions rarely depend on patterns alone. They require judgement about context, intent, credibility and relevance – particularly in areas such as business conduct, governance and emerging regulatory focus.

Statistically sound outputs from a single AI model, however sophisticated, often fall short in front of risk or investment committees, audit functions or regulators when one of two questions inevitably arises: “Why did we miss this?” or “Why did we act on that?”

Decision-grade risk intelligence

What the surveyed C-suite executives appear to be converging on is a different standard: decision-grade risk intelligence.

This means data that is not only timely and comprehensive, but also traceable, explainable and defensible. The report shows that concerns are significantly greater for AI-only approaches: 66% of executives cited false positives and negatives as a concern or major concern, and 62% pointed to a lack of transparency, explainability and auditability. The comparable figures for hybrid human-AI approaches were 25% and 32%, respectively.

Crucially, this is not a rejection of AI. On the contrary, AI is essential to scale. But it is being repositioned, from an autonomous decision-maker to an analytical engine operating within clear human governance.

In this framing, humans are not a bottleneck; they are guardians of accuracy.

Investment behaviour tells the same story

Changes in spending patterns reinforce this conclusion. Most firms have increased investment in business conduct risk data over the past year, but often reactively – with 58% reporting that spend increased after a major incident. Executives increasingly acknowledge that under-investment can raise long-term costs, suggesting a shift towards viewing risk intelligence as a strategic asset rather than discretionary spend.

This mirrors a familiar lesson from financial risk management more broadly: prevention is cheaper than remediation – but only if the data used for prevention is trusted enough to act on early.

What this means for financial decision-makers

For banks, asset managers, investors and insurers, the implications are significant.

First, risk models that optimise for speed at the expense of accuracy and explainability are increasingly misaligned with fiduciary reality. As conduct risks translate more directly into financial losses, the tolerance for ‘model said so’ decisions declines.

Second, firms using AI in investment and risk workflows must pay as much attention to governance architecture as to model performance. What is the source universe? Who validates outputs? How are edge cases handled? Can outputs be audited, traced and reproduced?

Third, the competitive advantage is shifting. The report’s data suggests that the top performers are not those with the most automation, but those that combine scalable technology with disciplined human judgement to identify risks earlier and respond faster.

In an environment where trust itself is becoming a scarce asset, investors who can explain their decisions, as well as defend them, will be better positioned to retain capital, regulatory confidence and strategic optionality.

The end of AI versus humans

The long-running debate over ‘AI versus humans’ is becoming outdated. The real question is whether institutions are building risk intelligence systems fit for a world in which risk is faster, less predictable and more costly than before.

The evidence points to a clear direction: automation is a force multiplier, not a substitute. When human insight remains firmly in the lead, technology becomes a catalyst for more transparent, trusted and defensible risk decisions.

View the webinar

Catch up on the webinar exploring The business conduct risk intelligence report 2026, featuring insights from:

- Yohan Hill, Adams Street Partners

- Maria Lombardo, RO‑SE Global

- Max Vickers, Oxford Economics

- Moderator: Jenny Nordby, RepRisk

Sponsored content

Copyright Infopro Digital Limited. All rights reserved.

As outlined in our terms and conditions, https://www.infopro-digital.com/terms-and-conditions/subscriptions/ (point 2.4), printing is limited to a single copy.

If you would like to purchase additional rights please email info@risk.net
