This article was paid for by a contributing third party.

Applying risk technology and data in a ‘brave new world’

A recent report from FIS argues that insurers will benefit from adopting a flexible and innovative data management strategy – one they can use to continually adapt to inevitable changes in regulation and business intelligence requirements. This article further explores how firms can get true strategic value from their data.

The risk management landscape for insurers has radically shifted. Since the financial crisis, risk professionals have come under growing pressure from regulators and senior management for better information, and need to provide assurance that the company’s risks are well understood and properly managed. Senior executives in particular are looking for an enterprise-wide view of risk, as well as accurate, up-to-date information about the use of capital by each business. On top of this, there is a growing community of external stakeholders ready to pore over insurers’ disclosures of their risk and solvency positions.

Actuaries and risk managers now play a pivotal role, and are being asked to produce more detailed reports, more quickly, and with greater transparency around the modelling process. Many are investing in technology to keep up with demand. But is there more to be gained than better productivity?

Optimising risk processes

Insurers have ploughed significant investment into their risk modelling and reporting processes in reaction to new regulations and through their Own Risk and Solvency Assessment (ORSA) initiatives. These efforts are clearly continuing, but the question has already arisen: how can the business itself make the most of its sizeable investment in new risk management processes and systems?

The first challenge is to incorporate the new processes into ‘business as usual’. The future of risk data management – a survey of risk professionals conducted recently by Insurance Risk and sponsored by FIS – found that 80% of firms will normally take anywhere from two months to over a year to embed processes that have been introduced for regulatory compliance. Encouragingly, a similar percentage of respondents said that the information created for the regulator can also benefit the business.

A logical next step is to make these processes as effective and efficient as possible. Streamlined, automated processes help drive down costs and free up the experts to focus on what they do best – analyse risk results.

One way in which insurers are improving their processes is by turning to cloud-based solutions. Common drivers include reducing capital expenditure on in-house systems, taking advantage of the flexibility of virtual model environments, and scaling processing to cope with variations in demand. Relatively cheap, rapid-access storage capacity, with the ability to access data from multiple locations, is another plus. Insurers are trying to cope with ever-expanding volumes of data, including new data sources and outputs from new advanced modelling techniques. Until recently, they had to rely above all on large, dedicated data centres, at substantial cost and with long lead times to add new capacity. The cloud is able to grow (and, perhaps more importantly, shrink) at very short notice, as needed.

Likewise, insurers are already taking advantage of leading business intelligence technologies to push risk-based information to an ever-expanding audience: internally to, for example, underwriting teams, and externally to regulators, rating agencies, analysts and investors. Clearly presented graphical reports are a simple yet very effective way of educating the stakeholder community about the risks the business is facing and how those risks are managed.

Bridging to the strategic

Operational effectiveness is certainly valuable. But – as has long been argued – ultimately this does not help the firm strategically. Industrialised processes tend to converge to industry ‘best practice’ where firms perform similar activities and the emphasis falls on increasing speed of delivery, improving quality and reducing costs – the imperative of ‘faster, better, cheaper’.

These are critical needs, and technology providers must support the industry with solutions in these areas. However, operational excellence, useful as it is, is not enough. It ultimately gives the company little room for manoeuvre if the processes in question become ‘hard-wired’, inflexible or ‘industry-standard’. New competitors, lacking the baggage of in-house legacy systems, are able to evolve faster as the business and regulatory framework develops, and will be able to model new risks more quickly, helping them bring new products to market faster.

The challenge is to combine operational effectiveness with flexible and innovative ways to convert risk data into unique risk insight – information that can help the company improve its strategic decision-making capabilities, and ultimately improve its return on capital.

What can help with this transition to true business value?

Building on existing processes and retaining flexibility

Actuaries and fellow risk professionals arguably have an excellent opportunity to profit from investments in ORSA and regulatory transformation projects, and to extend their data management capabilities. Keeping data management processes flexible helps the firm adapt to the next series of regulatory waves without having to fundamentally re-engineer processes or systems. Investments in risk architectures for Solvency II should, for example, provide a basis to support compliance with the upcoming changes to the International Financial Reporting Standard (IFRS) for insurance contracts.

Furthermore, flexibility is important in terms of being able to capture, blend and display a range of different data types in response to new and changing requirements, especially where these requirements may be ambiguous. The exact interpretation of a regulation may change at very short notice in response to market feedback, either through a formal consultation exercise or perhaps because of a significant market event. The wider the audience for risk data becomes, the larger the pool of potential new requirements, as more people ask more questions about the data they are seeing. Risk management and reporting systems must support continual reconfiguration to meet these changes – for example, new products, changes to processes or business re-organisations – without losing control over the provenance of data presented to the business and the regulators.

The survey, mentioned previously, provides interesting insight into additional data requirements. Around three-quarters of those surveyed are looking to add economic scenario data, approximately two-thirds want to include credit risk data, and around a half aim to include additional asset data or reference data, such as mortality tables, from third parties.

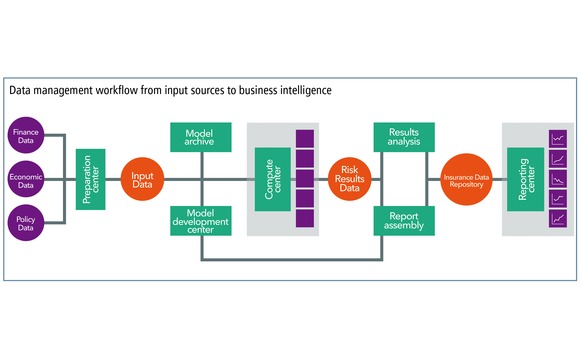

Exploiting a wealth of data

Actuaries may already be able to tap into underexploited data in the other systems in the company, such as details from core policy administration systems. Privileged access to internal data can be an important source of commercial advantage. They can apply their own techniques to blend actuarial and other data into clear visualisations of risk broken down by labels that are immediately meaningful to specific business audiences, for example, by business line or product type. Risk intelligence is no longer the sole preserve of the actuarial and risk functions – it has become an important shared internal resource. FIS’s efforts in this area have centred on the Prophet Data Management Platform, including the Insurance Data Repository that supports the collection and combination of data from a wide range of sources, mapping to business contexts and then displaying results with graphical business intelligence reports.
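As a rough illustration of what blending actuarial and policy data by a business label can look like, the sketch below joins a policy administration extract to per-policy capital figures and totals them by business line. It is a minimal Python sketch under stated assumptions: the record layout, the field names (`policy_id`, `business_line`, `product_type`), the figures and the function `capital_by_label` are all hypothetical, and none of it represents the Prophet Data Management Platform or any FIS interface.

```python
from collections import defaultdict

# Hypothetical extract from a policy administration system.
policies = [
    {"policy_id": "P1", "business_line": "Motor", "product_type": "Comprehensive"},
    {"policy_id": "P2", "business_line": "Motor", "product_type": "Third party"},
    {"policy_id": "P3", "business_line": "Life", "product_type": "Term"},
]

# Hypothetical actuarial model output: capital requirement per policy.
capital_by_policy = {"P1": 120.0, "P2": 80.0, "P3": 300.0}


def capital_by_label(policies, capital_by_policy, label):
    """Total per-policy capital under a business label such as 'business_line'."""
    totals = defaultdict(float)
    for policy in policies:
        # Policies with no model result contribute zero rather than failing.
        totals[policy[label]] += capital_by_policy.get(policy["policy_id"], 0.0)
    return dict(totals)


by_line = capital_by_label(policies, capital_by_policy, "business_line")
by_product = capital_by_label(policies, capital_by_policy, "product_type")
```

The same function serves either audience by changing the label, which is the point of mapping risk results to business contexts before they are charted.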

Another increasingly interesting and much-discussed area of technology is the concept of ‘big data’. This supports the notion of data as a strategically important resource, much of which still remains unexploited. The classical approach to data preparation is to push the data into a standard data model, such as a database, discarding whatever is not needed in the process. This approach works well, right up to the point where someone asks a question that can only be answered using the discarded data. The big data concept retains all the data and allows actuaries to re-query it at a later date to enrich the pool of data they are working with. Big data technologies provide an intriguing way to retain the value hidden in the detail.
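The contrast between the two approaches can be sketched in a few lines. The Python example below is illustrative only: it keeps every source record in full in an append-only store while loading just today's required fields into the modelling dataset, so that a field discarded from the model can still be recovered later. The names `raw_store`, `ingest` and `requery`, and the sample fields, are assumptions made for the example rather than part of any particular big data product.

```python
import json

# Append-only store of complete source records, kept as JSON text.
raw_store = []


def ingest(record):
    """Keep the full record, and return only the fields the model needs today."""
    raw_store.append(json.dumps(record))
    return {"policy_id": record["policy_id"], "premium": record["premium"]}


def requery(field):
    """Recover a field that was left out of the model, keyed by policy."""
    result = {}
    for line in raw_store:
        record = json.loads(line)
        if field in record:
            result[record["policy_id"]] = record[field]
    return result


# Hypothetical source data with fields the current model does not use.
source = [
    {"policy_id": "P1", "premium": 500.0, "postcode": "AB1", "channel": "broker"},
    {"policy_id": "P2", "premium": 650.0, "postcode": "CD2"},
]

modelled = [ingest(record) for record in source]
channels = requery("channel")  # a question nobody asked at load time
```

Had only `modelled` been kept, the sales channel could not be answered at all. The trade-off is the cost of storing and governing data whose use is not yet known.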

Advantage through new insights

Looking at the developments in data collection – for example, the scanning of social media content, meteorological data, driving behaviour data, health-related data from wearable devices and data from mobile phone apps – it seems clear that one of the major distinguishing features of a competitive business is the data to which it has access. Other features of a competitive business are its ability to analyse this data for critical risk information, extract actionable business intelligence from it, and then clearly demonstrate the effect of these actions using the company’s own data.

While the use of business intelligence is becoming more widespread within the industry, its importance in helping businesses profit from unique insights into their risks is still undervalued. No matter how good your risk models are and no matter how well controlled your data, unless the results are seen and understood by the people who need them, the effort is wasted. Often underrated, the ability to present data clearly, emphasising the key messages without swamping them with detail, is crucial to good business decision-making.

Operational proficiency can take you only so far. But, by looking beyond this and helping the company exploit data to reveal new business insights, actuaries and other risk professionals can now lead the way through a brave new world of risk technology and data.

Read more about the research referred to in this article by downloading the full white paper, Facing new challenges: The future of data risk management in the insurance industry

Copyright Infopro Digital Limited. All rights reserved.
